One-Line Summary: Memory systems extend LLM agents beyond the context window by providing structured mechanisms for storing, retrieving, and managing information across interactions and sessions.

Prerequisites: Transformer architecture, attention mechanisms, embeddings and vector representations, RAG fundamentals

What Are Memory Systems for LLM Agents?

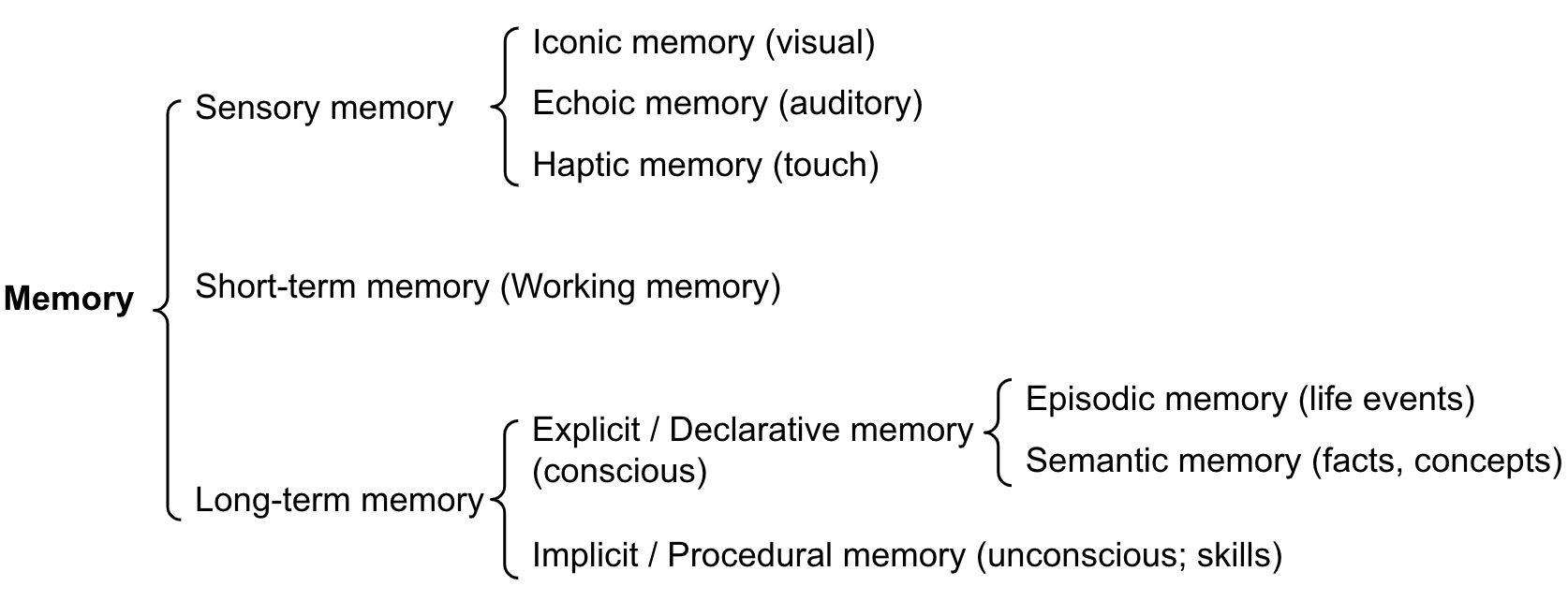

Consider how human memory works. Right now, you are holding the words of this sentence in sensory memory. The topic of this paragraph is in your short-term working memory. And your knowledge of what LLMs are comes from long-term memory, which itself breaks down into episodic memory (remembering when you first learned about transformers), semantic memory (knowing that attention is O(n^2)), and procedural memory (knowing how to write Python code). LLM memory systems borrow this cognitive science taxonomy to give agents persistent, structured access to information beyond what fits in a single prompt.

Source: Lilian Weng – LLM Powered Autonomous Agents

Source: Lilian Weng – LLM Powered Autonomous Agents

The fundamental constraint is the context window. Even with modern models supporting 128K-200K tokens, this is a finite working memory that gets wiped between sessions. Worse, Liu et al. (2023) demonstrated the "lost in the middle" problem: models perform well on information at the beginning and end of long contexts but degrade significantly on information placed in the middle. This means raw context stuffing is not a reliable memory strategy even when you stay within the window limit.

Memory systems solve this by creating external stores -- vector databases, knowledge graphs, summary buffers -- that the agent can read from and write to as needed. The agent's context window becomes like RAM in a computer: fast but limited, cleared on restart. External memory becomes like disk storage: larger, persistent across sessions, and accessed through explicit read/write operations. The art of memory system design is choosing what to keep in the context window (RAM), what to store externally (disk), and how to retrieve the right information at the right time with minimal latency and maximum relevance.

How It Works

flowchart TD

R1["MemGPT architecture"]

C2["MemGPT architecture"]

R1 --> C2

C3["hierarchical memory management"]

R1 --> C3

C4["main context and external storage"]

R1 --> C4Memory Types Mapped to LLM Architectures

| Cognitive Type | LLM Equivalent | Persistence | Access Pattern |

|---|---|---|---|

| Sensory | Raw token input | Momentary | Automatic |

| Working/Short-term | Context window | Per-session | Attention mechanism |

| Episodic (LT) | Conversation logs, vector store | Cross-session | Retrieval query |

| Semantic (LT) | Knowledge base, entity store | Permanent | Structured query |

| Procedural (LT) | Tool definitions, learned prompts | Permanent | Function invocation |

Storage Technologies

Vector Stores: The most common approach for semantic memory in LLM applications. Conversations and documents are split into chunks, embedded into dense vectors using an embedding model, and stored in specialized databases optimized for similarity search. At retrieval time, a query is embedded into the same vector space and the nearest neighbors are returned via approximate nearest neighbor (ANN) search algorithms. Popular options include:

- Pinecone: Managed cloud service, scales to billions of vectors, production-ready with built-in replication

- Weaviate: Open-source with native hybrid search (combining vector and keyword/BM25), GraphQL API

- Chroma: Lightweight and local-first, popular for prototyping and small-scale deployments, easy to embed in Python apps

- Qdrant: Rust-based for high performance, supports rich filtering alongside vector search, strong for production workloads

- pgvector: PostgreSQL extension, ideal for teams already using Postgres who want vector search without adding a new database to their stack

- FAISS: Facebook AI Similarity Search library for billion-scale indexes, not a database but an in-memory search index used as a building block

Knowledge Graphs: Store information as entity-relationship triples (subject, predicate, object). For example: (Alice, works_at, Acme Corp), (Acme Corp, industry, fintech), (Alice, founded, Startup_X). GraphRAG (Microsoft, 2024) builds a knowledge graph from documents automatically and uses community detection algorithms to create hierarchical summaries at multiple levels of abstraction. This excels at answering questions that require connecting multiple pieces of information ("What companies has Person X founded that operate in Sector Y?") where flat vector retrieval struggles because the answer requires traversing entity relationships rather than matching surface-level text similarity.

MemGPT: LLM as Operating System

MemGPT (Packer et al., arXiv:2310.08560) reimagines the LLM as an operating system where the context window is RAM and external storage is disk. The key innovation is self-directed memory management: the agent itself decides when to page information in and out of context, rather than relying on a fixed retrieval strategy.

MemGPT exposes memory functions the agent can call as tools:

core_memory_append: Add to persistent persona/user memory (always in context)core_memory_replace: Update existing core memory entriesarchival_memory_insert: Store information in long-term archival storagearchival_memory_search: Query the archival store with semantic searchconversation_search: Search past conversation history by keyword or meaning

This approach maintained coherent, personalized conversations across 20+ sessions in testing, far exceeding what naive context truncation could achieve. The agent remembered user preferences, ongoing projects, and prior discussion threads without any information being re-stated.

The MemGPT paradigm has influenced subsequent agent frameworks. The core idea -- giving the agent explicit control over its own memory operations rather than relying on fixed retrieval heuristics -- has become a design pattern adopted by multiple production agent systems.

Common Memory Patterns

- Buffer Memory: Store the full conversation history, truncating from the front when the context limit is reached. Simple to implement but loses important early context and instructions.

- Summary Memory: Periodically compress older conversation history into summaries using an LLM call. Preserves key information at the cost of detail and nuance.

- Entity Memory: Extract and maintain a running store of entities (people, projects, preferences, decisions) mentioned in conversation. Good for personalization and tracking evolving state over time.

- Hybrid Memory: Combine buffer (recent messages verbatim), summary (older history compressed), and entity extraction (key facts structured). This is the most robust approach for production systems and is what most deployed agents use. The tradeoff is implementation complexity -- maintaining three synchronized memory subsystems requires careful engineering.

Persistence Strategies

Memory persistence varies by use case and deployment context:

- Thread-based: Memory scoped to a single conversation thread, lost when the thread ends. Simplest to implement, suitable for stateless interactions like customer support tickets.

- User profiles: Persistent facts about each user (preferences, communication style, domain context, past decisions) that carry across all threads. Essential for personalized assistants.

- Checkpoints: Full agent state snapshots that can be restored, enabling time-travel debugging and branching workflows. Used in LangGraph and similar stateful agent frameworks.

- Knowledge base evolution: The agent's accumulated knowledge grows over time as it processes more information and interactions. Requires curation to avoid unbounded growth and retrieval noise.

The choice of persistence strategy depends on the application. A coding assistant benefits from user profiles (language preferences, project context) and knowledge base evolution (learned codebase patterns). A customer service bot may only need thread-based memory plus a shared FAQ knowledge base.

Why It Matters

- Session continuity: Users expect agents to remember prior conversations, decisions, and preferences. Without persistent memory, every interaction starts from zero, forcing users to repeat context and eroding trust.

- Personalization: Memory enables agents to learn user preferences, communication style, technical level, and domain context over time, dramatically improving the relevance and quality of responses.

- Knowledge accumulation: Agents can build up domain expertise across interactions, becoming more capable and contextually aware as they process more information from diverse sources.

- Context window efficiency: Rather than stuffing everything into the prompt and paying for massive input token counts, memory systems retrieve only the most relevant information, keeping token costs manageable at production scale.

- Complex task support: Long-running workflows (multi-week research projects, iterative software development, ongoing customer support cases) require memory that spans hours, days, or weeks of interaction without degradation.

Key Technical Details

- The "lost in the middle" effect shows 10-20% accuracy degradation for information placed at positions 40-60% through a long context versus the beginning or end

- Vector similarity search typically uses cosine similarity or dot product; HNSW (Hierarchical Navigable Small World) indexes enable sub-millisecond retrieval at million-document scale

- Embedding models for memory retrieval (e.g., text-embedding-3-small at 1536 dimensions) add approximately 0.1ms per embedding and cost about $0.02 per 1M tokens

- MemGPT's self-directed memory management adds 1-3 additional LLM calls per turn for the paging operations, increasing latency and cost

- Conversation summary compression ratios are typically 5:1 to 10:1 (10 messages compressed to 1-2 summary sentences)

- Knowledge graph construction from unstructured text achieves approximately 70-85% precision on entity-relation extraction depending on domain complexity

- Production systems typically combine vector stores (for semantic similarity) with keyword search (BM25) in a hybrid retrieval approach for better recall

- Reranking retrieved memories with a cross-encoder model improves precision by 10-25% over raw vector similarity scores

- Memory write operations should include metadata: timestamp, source agent, importance score, and topic tags to enable filtered retrieval

- For multi-user deployments, memory isolation (ensuring User A cannot retrieve User B's memories) is a critical security requirement

Common Misconceptions

- "Larger context windows eliminate the need for memory systems." Even with 200K token windows, the lost-in-the-middle problem, session persistence requirements, and cost considerations (paying for 200K input tokens every turn) make external memory essential for production agents. A 200K context costs 10-50x more per request than targeted retrieval.

- "Vector similarity always retrieves the most relevant memories." Embedding similarity can miss temporally relevant information ("what did we discuss yesterday?") or structurally relevant information (entity relationships). Hybrid approaches combining semantic, temporal, and structured retrieval consistently perform better than any single method.

- "Memory should store everything." Indiscriminate storage creates retrieval noise and degrades precision. Effective memory systems curate what gets stored using importance scoring, deduplication, and periodic consolidation -- much like human memory consolidation during sleep selectively strengthens important memories.

- "Embedding-based retrieval understands temporal queries." Standard vector embeddings encode semantic meaning, not time. A query like "what did we discuss last Tuesday?" requires temporal metadata filtering on top of semantic search. Without explicit timestamp handling, the system will return semantically similar content regardless of when it occurred.

Connections to Other Concepts

token-embeddings.md: The foundation for vector store memory -- text must be embedded into dense vectors for similarity-based retrieval to work.rag.md: Memory retrieval is a form of RAG where the document store is the agent's own interaction history and accumulated knowledge rather than a static corpus.self-reflection.md: Reflexion's episodic memory is a specific application of memory systems, storing verbal reflections across trials to improve future performance.transformer-architecture.md: The attention mechanism over the context window is the built-in "working memory" that external memory systems extend and augment.multi-agent-systems.md: Shared memory stores enable multiple agents to coordinate, share findings, and maintain consistent state across a collaborative workflow.attention-mechanism.md: Understanding how attention distributes weight across the context window explains why the "lost in the middle" problem occurs and why external memory is needed.prompt-engineering.md: Memory retrieval results must be formatted and injected into prompts effectively. The placement, formatting, and quantity of retrieved memories significantly impact generation quality.

Further Reading

- Packer et al., "MemGPT: Towards LLMs as Operating Systems," arXiv:2310.08560, 2023

- Liu et al., "Lost in the Middle: How Language Models Use Long Contexts," arXiv:2307.03172, 2023

- Microsoft Research, "GraphRAG: Unlocking LLM Discovery on Narrative Private Data," 2024

- Zhang et al., "A Survey on the Memory Mechanism of Large Language Model Based Agents," arXiv:2404.13501, 2024

- Park et al., "Generative Agents: Interactive Simulacra of Human Behavior," arXiv:2304.03442, 2023

- Lewis et al., "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks," NeurIPS 2020

- Johnson et al., "Billion-Scale Similarity Search with GPUs" (FAISS), IEEE Big Data 2019