One-Line Summary: Long-term persistent memory enables agents to store and retrieve information across sessions using vector stores, databases, and files, overcoming the ephemeral nature of context windows through systems like MemGPT's hierarchical memory management.

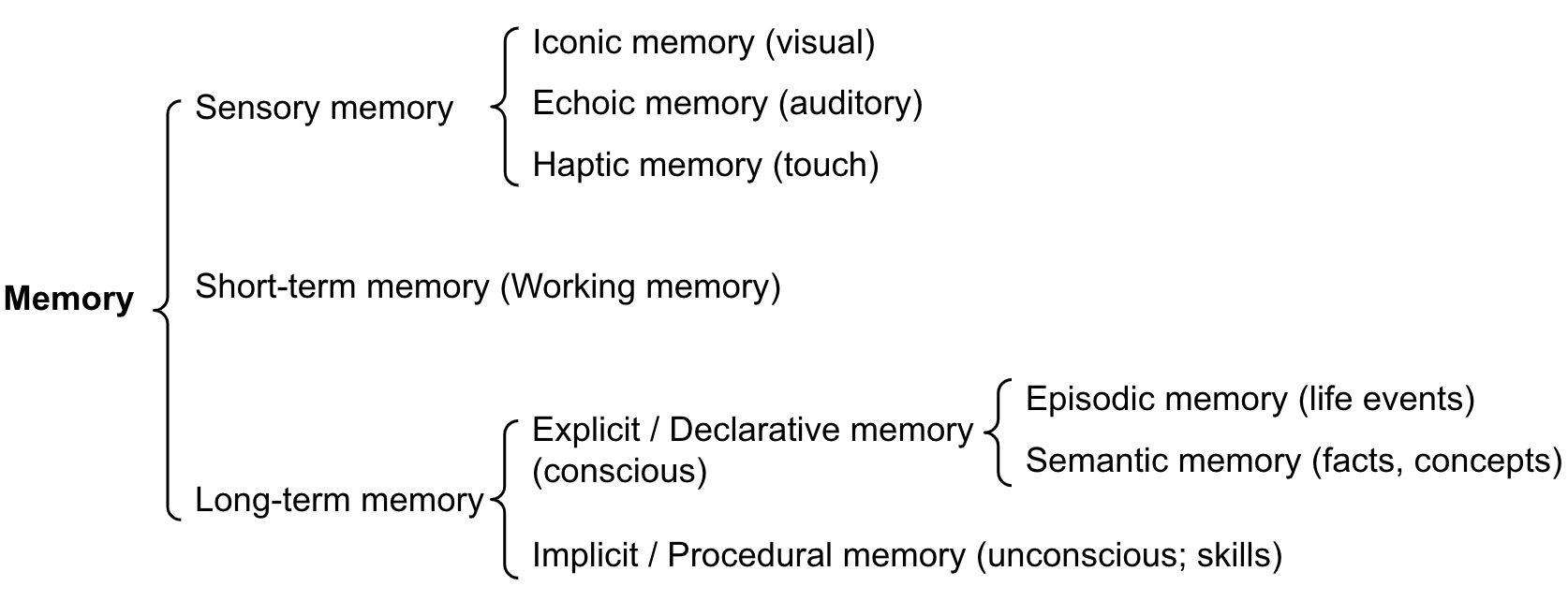

Prerequisites: Memory architecture overview, short-term context memory, embedding models, vector databases

What Is Long-Term Persistent Memory?

Imagine a researcher who keeps a meticulously organized filing cabinet. After each day of research, they file away their notes under categorized folders: "Methodology papers," "Experimental results," "Key contacts," "Open questions." Weeks later, when they need to recall a specific finding, they know exactly which folder to search. They do not remember every detail in their head (working memory), but they can reliably find and retrieve any piece of information when needed. The filing cabinet is their long-term memory: vast, persistent, and organized for retrieval.

For AI agents, long-term persistent memory is any storage mechanism that retains information beyond the current context window and across separate sessions. Without it, every conversation starts from scratch: the agent has no memory of past interactions, learned preferences, accumulated knowledge, or prior task outcomes. This is the default behavior of stateless LLM APIs: each request is independent, and the model has no built-in persistence. Long-term memory must be explicitly engineered.

Source: Lilian Weng, "LLM Powered Autonomous Agents" (2023) — Long-term memory (maximum inner product search over embeddings) persists information beyond the ephemeral context window.

Source: Lilian Weng, "LLM Powered Autonomous Agents" (2023) — Long-term memory (maximum inner product search over embeddings) persists information beyond the ephemeral context window.

The core challenge is not storage (disk space is cheap) but retrieval: given a current task or query, how does the agent find the specific memories that are relevant among potentially millions of stored items? This is fundamentally a search problem, and the quality of retrieval determines the usefulness of the entire memory system.

How It Works

Vector Store Memory

The most common implementation of long-term memory for agents uses vector embeddings:

- Encoding: Text (conversation turns, facts, observations) is converted to dense vector embeddings using an embedding model (OpenAI's text-embedding-3-large, Cohere's embed-v3, or open-source alternatives like E5, BGE)

- Storage: Embeddings are stored in a vector database (Pinecone, Weaviate, Chroma, pgvector, Qdrant, FAISS) along with the original text and metadata (timestamp, source, topic, importance score)

- Retrieval: When the agent needs to recall information, the current query is embedded and a nearest-neighbor search finds the most semantically similar stored memories

- Injection: Retrieved memories are inserted into the context window, typically in a dedicated "relevant memories" section

# Simplified vector memory flow

memory_text = "User prefers Python over JavaScript for backend development"

embedding = embed_model.encode(memory_text)

vector_store.upsert(id="mem_001", vector=embedding, metadata={

"text": memory_text,

"timestamp": "2024-03-15T10:30:00Z",

"topic": "user_preferences",

"importance": 0.8

})

# Later retrieval

query = "What language should I use for the API?"

query_embedding = embed_model.encode(query)

results = vector_store.query(query_embedding, top_k=5)

# Returns: ["User prefers Python over JavaScript for backend..."]Database-Backed Memory

For structured information (user profiles, task histories, factual knowledge), traditional databases are more appropriate than vector stores:

- Key-value stores (Redis, DynamoDB): Fast lookup of specific facts by key. "user_timezone" -> "America/New_York"

- Relational databases (PostgreSQL, SQLite): Complex queries over structured data. "SELECT * FROM tasks WHERE status='completed' AND topic LIKE '%machine learning%'"

- Document stores (MongoDB): Flexible schema for heterogeneous memory items

Database-backed memory excels at exact recall ("What is the user's timezone?") but does not support the fuzzy semantic search that vector stores provide ("What has the user said about their schedule preferences?").

File-Based Memory

Some agents persist memory as files:

- Markdown notes: The agent writes summaries of each session to a file, creating a growing knowledge base

- JSON state files: The agent serializes its state (current task, preferences, accumulated knowledge) to JSON at the end of each session and reloads it at the start of the next

- Structured logs: Timestamped records of actions, observations, and outcomes

File-based memory is simple to implement but lacks efficient retrieval: finding relevant information requires reading and parsing files, which scales poorly.

The MemGPT Approach: Hierarchical Memory Management

MemGPT (Packer et al., 2023) treats the LLM's context window as "main memory" and external storage as "disk," borrowing concepts from operating system virtual memory:

- Main context (RAM): The current context window, limited in size but immediately accessible

- Archival storage (Disk): Unlimited external storage (vector database), requiring explicit read/write operations

- Memory management functions: The agent has tool-like functions to

archival_memory_insert(text),archival_memory_search(query),core_memory_append(text), andcore_memory_replace(old, new) - Paging: When the main context fills up, older information is automatically "paged out" to archival storage, and relevant information is "paged in" when needed

This creates a tiered memory system where the agent can manage an effectively unlimited memory store while only keeping the most relevant information in its working context.

Why It Matters

Enables Cross-Session Continuity

Without persistent memory, every agent session starts with zero knowledge of the user, their preferences, or past work. Persistent memory enables: "Last week we started working on the database migration. You mentioned wanting to keep backward compatibility with the v2 API. Let me pick up where we left off."

Supports Long-Running Tasks

Tasks that span hours, days, or weeks cannot fit within a single context window. Persistent memory allows the agent to checkpoint its progress, store intermediate results, and resume later without losing work.

Enables Personalization and Adaptation

Over many interactions, the agent accumulates knowledge about the user: their coding style, preferred tools, communication preferences, common tasks. This accumulated profile enables increasingly personalized and efficient assistance.

Key Technical Details

- Embedding model dimensions: text-embedding-3-small: 1536d, text-embedding-3-large: 3072d, E5-large: 1024d. Higher dimensions improve retrieval accuracy at the cost of storage and compute

- Vector database scaling: FAISS handles millions of vectors on a single machine. Pinecone and Weaviate scale to billions with distributed architectures. For most agent use cases, thousands to millions of memories are sufficient

- Retrieval latency: In-memory vector search (FAISS): 1-10ms. Managed vector databases: 10-100ms. Acceptable for interactive use when retrieving 3-5 memories per agent step

- Memory chunk size: Individual memory items should be 100-500 tokens. Too short (single sentences) loses context; too long (full documents) reduces retrieval precision

- Metadata filtering: Combining vector similarity with metadata filters (e.g., "find memories about Python from the last 30 days") dramatically improves retrieval relevance

- Storage costs: Vector databases charge per vector stored and per query. At typical scales (10K-100K memories), costs are $5-50/month. Well within budget for most applications

- Deduplication: Without deduplication, the same information stored across multiple sessions creates redundancy that dilutes retrieval quality. Periodic deduplication (merging similar memories) maintains store quality

- Privacy considerations: Persistent memory stores user data across sessions. This requires clear data retention policies, user consent mechanisms, and deletion capabilities (right to be forgotten)

Common Misconceptions

-

"Vector search always finds the right memories." Vector similarity is approximate. A query about "Python performance" might retrieve memories about "Monty Python performance" due to surface-level similarity. Metadata filtering, reranking, and hybrid search (combining vector and keyword search) are necessary for reliable retrieval.

-

"More stored memories means a better memory system." Quality trumps quantity. A memory store with 1000 well-curated, deduplicated, high-relevance memories outperforms one with 100,000 noisy, redundant entries. Memory stores require maintenance.

-

"Long-term memory replaces the need for good context management." Long-term memory supplements context management; it does not replace it. Retrieved memories still consume context window space. The agent must still decide how much context budget to allocate to retrieved memories vs. conversation history vs. reasoning space.

-

"Embedding models understand meaning perfectly." Embeddings capture semantic similarity along the dimensions emphasized in training data. They can miss domain-specific nuances, struggle with negation, and fail to distinguish between related but different concepts. Retrieval should be treated as recall-optimized (finding candidates) with a reranking step for precision.

Connections to Other Concepts

memory-architecture-overview.md— Long-term persistent memory is one of the three layers in the memory architecture, serving as the "hard drive" that stores information beyond the context window's capacityshort-term-context-memory.md— Information flows between short-term (context window) and long-term (persistent storage) memory through encoding (storage) and retrieval (recall) operationsmemory-retrieval-strategies.md— The strategies for finding the right memories in the long-term store: recency, relevance, importance scoring, and hybrid approachesepisodic-memory.md— Episodic memory is a specific type of long-term memory focused on past experiences and interactions, often implemented on top of vector storesmemory-compression.md— Before storing information long-term, compression reduces redundancy and ensures storage quality; retrieved memories may also need decompression for full context

Further Reading

- Packer, C., Wooders, S., Lin, K., et al. (2023). "MemGPT: Towards LLMs as Operating Systems." Introduces hierarchical memory management with context window as main memory and vector stores as archival storage.

- Lewis, P., Perez, E., Piktus, A., et al. (2020). "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks." Foundational paper on combining retrieval with generation, the basis for retrieval-augmented memory.

- Borgeaud, S., Mensch, A., Hoffmann, J., et al. (2022). "Improving Language Models by Retrieving from Trillions of Tokens." Demonstrates retrieval-augmented LLMs at scale, showing how external memory can dramatically improve model capabilities.

- Khattab, O., Santhanam, K., Li, X., et al. (2022). "Demonstrate-Search-Predict: Composing Retrieval and Language Models for Knowledge-Intensive NLP." Introduces DSP framework for composing retrieval with LLM reasoning.