One-Line Summary: Agent state management is the practice of tracking, organizing, and maintaining all information an agent needs across turns — including conversation history, working memory, task progress, and environmental snapshots — because the LLM itself retains nothing between calls.

Prerequisites: what-is-an-ai-agent.md, agent-loop.md, llm-as-reasoning-engine.md

What Is Agent State Management?

Picture a detective working a complex case over several weeks. Their desk is covered with organized materials: a case file with all evidence collected so far, a whiteboard with the current theory and connections, sticky notes with immediate next steps, and a filing cabinet with background research. Every morning, they review their desk to recall where they left off. Without this system, they would lose track of leads, forget critical evidence, and repeat work they have already done. An AI agent's state management serves exactly the same purpose — it is the agent's "working desk."

Agent state management exists because of a fundamental architectural fact: the LLM is stateless. Each time the agent loop calls the LLM, the model starts from scratch. It has no memory of previous calls. Everything the agent "remembers" must be explicitly represented in the context sent to the LLM on each call. This means the agent runtime is responsible for maintaining, organizing, and presenting all relevant state information so the LLM can make informed decisions.

State management encompasses several distinct types of information: the full conversation history (all messages and tool results), the agent's working memory (current understanding, hypotheses, plans), task progress tracking (what has been done, what remains), and environmental state (the current state of files, databases, and systems the agent has interacted with). How these types of state are stored, updated, and presented to the LLM directly impacts the agent's effectiveness.

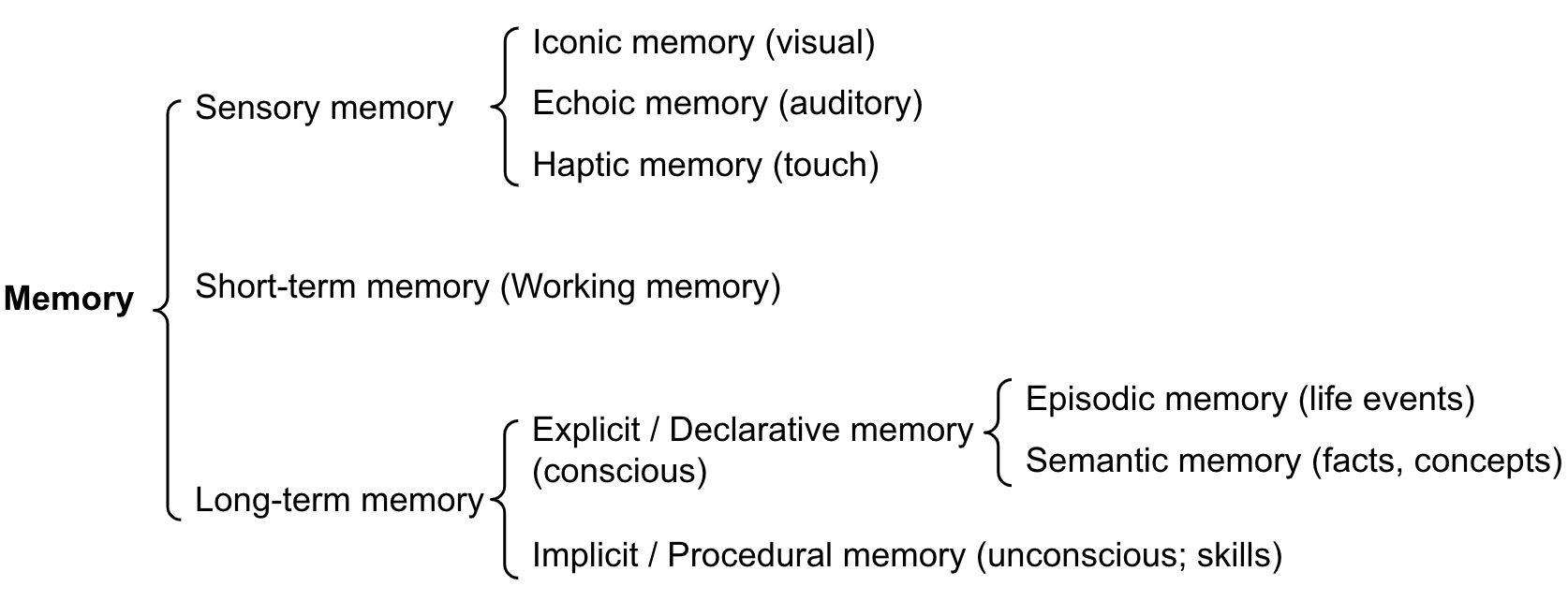

Source: Lilian Weng, "LLM Powered Autonomous Agents" (2023) — The memory diagram shows how different types of state (sensory, working, long-term) are organized and managed across the agent's lifetime.

Source: Lilian Weng, "LLM Powered Autonomous Agents" (2023) — The memory diagram shows how different types of state (sensory, working, long-term) are organized and managed across the agent's lifetime.

How It Works

Types of Agent State

Conversation History (Message Log) The primary state container is the ordered list of messages exchanged between the user, the LLM, and tools. Each message has a role (user, assistant, tool) and content. This history grows monotonically — every turn adds messages but none are removed (until context management intervenes). In a 30-turn task, the history might contain:

- 1 system message (2,000 tokens)

- 1-3 user messages (500-2,000 tokens)

- 30 assistant messages with tool calls (10,000-30,000 tokens)

- 30 tool result messages (10,000-50,000 tokens)

Total: 22,000-84,000 tokens of accumulated state.

Working Memory (Scratchpad) Some agent architectures provide the LLM with a scratchpad — a designated area where it can write notes, hypotheses, and intermediate conclusions. This is distinct from the conversation history because the agent explicitly manages its contents. Patterns include:

- Chain-of-thought in assistant messages: The LLM's reasoning text serves as implicit working memory visible in subsequent turns.

- Explicit scratchpad tool: A tool like

update_scratchpad(content)that lets the agent maintain a persistent note that is included in every subsequent context. - Structured state objects: A JSON object tracking key-value pairs the agent has determined (e.g.,

{"bug_location": "src/auth.py:142", "root_cause": "missing null check", "fix_approach": "add validation"}).

Task Progress Tracking what the agent has accomplished and what remains. This can be implicit (the LLM infers progress from the conversation history) or explicit (a task list that the agent updates). Explicit progress tracking reduces the risk of the agent repeating work or losing track of its position in a complex task.

Environmental State Snapshots Cached information about the environment: the current contents of files the agent has read, the results of recent commands, the structure of the project directory. These snapshots save the agent from redundantly re-reading files that have not changed and help it reason about the current state of the world.

Ephemeral vs. Persistent State

Ephemeral state exists only for the duration of a single task or session. The conversation history, working memory, and task progress are all ephemeral by default — they vanish when the session ends. Most agent interactions use only ephemeral state.

Persistent state survives across sessions. Examples include:

- User preferences: The agent remembers that this user prefers TypeScript over JavaScript, or pytest over unittest.

- Project context: The agent retains knowledge about the project's architecture, conventions, and common patterns across sessions.

- Learned patterns: The agent records successful approaches for types of tasks it encounters repeatedly.

Persistent state mechanisms include:

- Memory files: Files stored on disk (like

.claude/memories.md) that are loaded at session start. - Vector databases: Embeddings of past interactions that can be semantically retrieved.

- Structured profiles: JSON or YAML files storing user/project preferences.

Context Window Management Strategies

As state accumulates, it eventually threatens to exceed the context window. Management strategies include:

Truncation: Removing the oldest messages from the history, keeping the system prompt and recent turns. Simple but lossy — critical early information may be discarded.

Summarization: Periodically condensing older portions of the history into a summary. For example, replacing turns 1-20 (15,000 tokens) with a 500-token summary that captures key findings and decisions. More sophisticated but introduces summarization errors.

Sliding window with anchor points: Keeping the system prompt, the original user instruction, and the most recent N turns in full detail. Intermediate turns are either summarized or removed. The "anchor points" (system prompt + original instruction) prevent goal drift.

Selective retrieval: Instead of maintaining the full history in context, storing it externally and retrieving only relevant portions based on the current reasoning step. This is the approach used by MemGPT and similar architectures.

Tool result compression: Tool results are often the largest state contributors. A 2,000-line file read can be replaced with a summary or the specific relevant lines after the agent has processed it. Terminal output from test runs can be reduced to pass/fail plus failing test details.

State Consistency Challenges

Maintaining consistent state is difficult because:

- The environment changes independently: Other users may modify files, services may restart, data may be updated between agent turns.

- The agent's actions change the environment: After writing a file, the agent's cached version may differ from the disk version if another process modifies it.

- Summarization introduces distortion: Summarized state may omit details that become relevant later.

- State conflicts across branches: If the agent explores multiple approaches, state from abandoned branches may conflict with the current approach.

Production agents mitigate these issues by re-reading critical state before acting (verifying file contents before editing), timestamping state entries, and explicitly invalidating cached state when the environment changes.

Why It Matters

Coherence Over Long Tasks

Without effective state management, agents lose coherence after 10-15 turns. They forget what they have already tried, repeat failed approaches, contradict earlier decisions, or lose track of the overall goal. State management is what enables agents to maintain a consistent thread of work across 30, 50, or 100+ turns.

Token Efficiency

State management directly impacts cost. An agent that re-reads files unnecessarily or maintains verbose history wastes tokens. An agent with effective state compression achieves the same outcomes with 30-50% fewer total tokens. At scale, this translates to significant cost savings.

Debugging and Auditability

Well-managed state creates an audit trail. When an agent produces an incorrect result, developers can inspect the state at each turn to identify where the reasoning went wrong. This is essential for improving agent reliability and debugging production issues.

Key Technical Details

- State growth rate: Typical agents accumulate 1,500-3,000 tokens of state per loop iteration (assistant message + tool call + tool result). A 50-iteration task accumulates 75,000-150,000 tokens of raw state.

- Summarization compression ratio: LLM-based summarization typically achieves 5:1 to 15:1 compression. A 20,000-token history segment can be summarized to 1,500-4,000 tokens while retaining the key information.

- Context window utilization thresholds: Agent performance begins to degrade when context utilization exceeds 70-80% of the window. At 90%+, the LLM may truncate reasoning, miss instructions, or produce lower-quality output. Proactive context management should trigger at 60-70% utilization.

- Memory file formats: Claude Code uses markdown files (

.claude/CLAUDE.md, project-levelCLAUDE.md) for persistent state. These are loaded at session start and typically contain 200-2,000 tokens of project context, conventions, and preferences. - State serialization overhead: Converting structured state to/from text representation adds 10-20% token overhead compared to the raw information content. JSON is more structured but more verbose than natural language summaries.

- Working memory capacity: Analogous to human working memory limits, LLMs effectively track 5-9 distinct "items" in their reasoning. Beyond this, accuracy on any single item degrades. This limits the complexity of plans and state that the LLM can manage without external scaffolding.

- Re-reading vs. caching tradeoff: Re-reading a file costs 100-5,000 tokens per read. Caching it in context costs the same tokens but on every subsequent turn. For files referenced more than 3-4 times, caching in a summary is more efficient than re-reading.

Common Misconceptions

"The LLM remembers everything from previous turns." The LLM remembers nothing. Every piece of information it appears to "remember" is actually present in the message history sent with each API call. If information is not in the current context, it does not exist for the LLM.

"More state is always better — include everything." Including all state without filtering creates the "lost in the middle" problem: the LLM struggles to find relevant information among irrelevant details. Curated, relevant state outperforms exhaustive state. Quality over quantity applies to state as much as to any other input.

"Conversation history is the only state that matters." Conversation history is the most visible state, but not the only important one. Environmental state (what files look like now, not when they were last read), task progress (explicit tracking of completed vs. pending sub-goals), and working memory (current hypotheses and plans) all contribute to effective agent behavior.

"Persistent memory across sessions is straightforward." Persistent memory introduces significant challenges: what to remember (not everything is worth persisting), when to forget (outdated information can mislead), how to retrieve (semantic search vs. keyword vs. explicit recall), and how to avoid conflicts (memories from different contexts may contradict). Simple memory file approaches work for basic use cases; sophisticated retrieval-augmented memory requires significant engineering.

"State management is a solved problem — just use the full context window." Even with 200K-token windows, state management remains critical. A 200-iteration task generates more state than any current context window can hold. Additionally, reasoning quality degrades well before the window is full. Active state management is necessary regardless of window size.

Connections to Other Concepts

agent-loop.md— Each loop iteration both consumes and produces state. The loop and state management are tightly coupled.llm-as-reasoning-engine.md— The LLM's statelessness is the fundamental reason agent state management exists.environment-and-observations.md— Observations from the environment become part of the agent's state, and managing observation state is a major component of overall state management.goal-specification.md— The agent's understanding of the current goal and sub-goals is a critical state element that must be maintained and re-anchored throughout execution.determinism-vs-stochasticity.md— State management affects reproducibility: identical state should produce identical agent behavior (at temperature 0), but state differences across runs compound into divergent behavior.

Further Reading

- Packer et al., "MemGPT: Towards LLMs as Operating Systems" (2023) — Introduces virtual context management with explicit memory tiers (main context, archival memory, recall memory), inspired by operating system virtual memory.

- Park et al., "Generative Agents: Interactive Simulacra of Human Behavior" (2023) — Implements a memory architecture with observation, reflection, and planning streams for long-running agent simulations, demonstrating the importance of structured state.

- Wang et al., "Augmenting Language Models with Long-Term Memory" (2023) — Explores mechanisms for giving LLMs access to information beyond their context window through external memory retrieval.

- Zhong et al., "MemoryBank: Enhancing Large Language Models with Long-Term Memory" (2023) — Proposes a memory management system that stores, retrieves, and forgets information based on relevance and recency.

- Weng, Lilian, "LLM Powered Autonomous Agents" (2023) — Blog post providing a practical overview of agent memory architectures including short-term (context), long-term (vector store), and working memory (scratchpad).