One-Line Summary: System prompt instructions lose effectiveness over long conversations, typically degrading after 20-30 turns, requiring active reinforcement techniques to maintain consistent model behavior.

Prerequisites: 04-system-prompts-and-instruction-design/system-prompt-anatomy.md, 01-foundations/context-window-mechanics.md

What Is Multi-Turn Instruction Persistence?

Imagine a factory floor where safety rules are posted on a sign at the entrance. On the first day, every worker reads the sign carefully and follows every rule. By the second week, some rules start to slip. By the end of the month, the sign is background noise -- visible but no longer actively attended to. This is why effective factories repeat safety rules at the start of every shift, not just once at hiring. Multi-turn instruction persistence is the same problem applied to LLMs: the system prompt is read once at the beginning of the conversation, but as the conversation grows longer, the model's effective attention to those instructions diminishes.

This phenomenon is a direct consequence of how transformer attention mechanisms work. The system prompt tokens are at the very beginning of the context window. As conversation turns accumulate, the distance between the system prompt and the model's current generation point grows. While the system prompt remains in the context window, the model's practical attention to distant tokens decreases, especially for instructions that are not reinforced by the conversational content. The result is instruction drift: the model gradually deviates from its system prompt directives, becoming more responsive to recent user messages and less adherent to original constraints.

Instruction persistence is one of the most underappreciated challenges in production LLM applications. Developers who test their system prompts with 2-3 turn conversations may be satisfied with compliance, only to discover that the same prompt produces dramatically different behavior at turn 30 or 50. Understanding and mitigating instruction decay is essential for any application with extended conversations.

flowchart LR

S1["graph showing instruction compliance (y-ax"]

S2["over conversation turns (x-axis, 0-50)"]

S3["ay curves for stylistic instructions (fast"]

S4["and hard safety rules (slowest decay)"]

S1 --> S2

S2 --> S3

S3 --> S4Source: Adapted from findings in Xu et al., "Stress Testing LLM Instruction Following over Conversation Turns" (2024) and Liu et al., "Lost in the Middle" (2024)

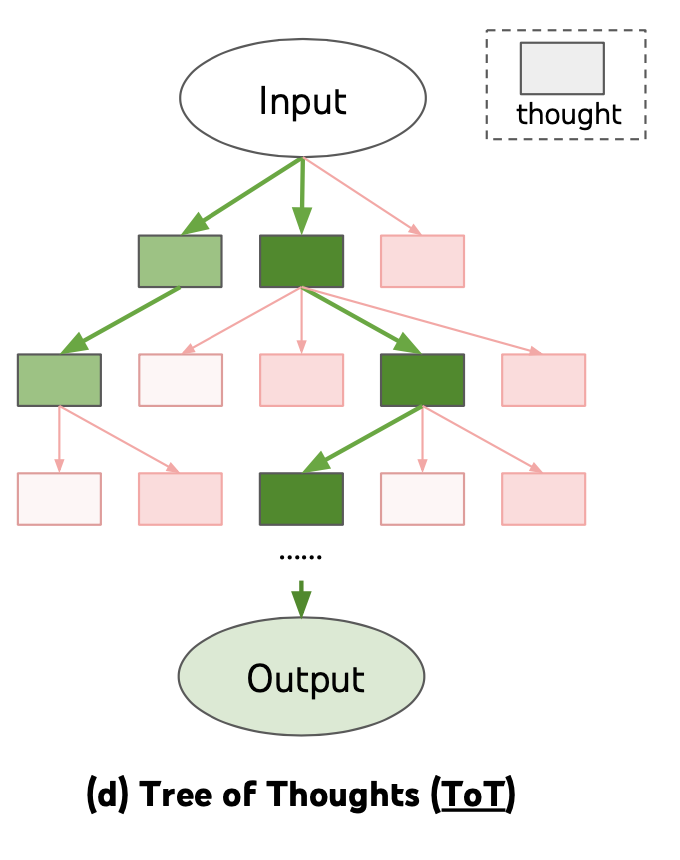

Source: Lilian Weng, "Prompt Engineering," lilianweng.github.io (2023) -- illustrates how structured prompting approaches help maintain instruction coherence across extended interactions

Source: Lilian Weng, "Prompt Engineering," lilianweng.github.io (2023) -- illustrates how structured prompting approaches help maintain instruction coherence across extended interactions

How It Works

The Decay Curve

Instruction adherence follows a characteristic decay pattern. For the first 5-10 turns, compliance is high (85-95% of instructions followed). Between turns 10-20, a gradual decline begins, with stylistic and tone instructions degrading first.

By turns 20-30, behavioral constraints start to weaken, and the model may violate rules it followed perfectly in early turns. Beyond 30 turns, significant drift is common -- the model may adopt tones, reveal information, or perform actions that the system prompt explicitly prohibits.

The exact curve depends on the model, the system prompt's strength, and the content of the conversation. Conversations that actively engage with constrained topics cause faster decay than neutral conversations.

Periodic Re-Injection

The most direct mitigation is to periodically re-inject critical instructions into the conversation. This can be done by appending key rules to the system prompt at regular intervals (e.g., every 10 turns) or by inserting a "system reminder" message into the conversation history.

The reminder does not need to repeat the entire system prompt -- the 3-5 most critical constraints are usually sufficient. This technique effectively resets the decay clock for the re-injected instructions.

Implementation options include a timer-based approach (re-inject every N turns), a trigger-based approach (re-inject when the conversation touches on constrained topics), or a hybrid that combines both.

Anchor Instructions

Anchor instructions are phrases embedded in the system prompt that instruct the model to self-reinforce: "Before each response, silently review your core instructions: [list of 3-5 key rules]."

This technique leverages the model's instruction-following capability to create a self-sustaining reinforcement loop. The model generates an internal review of its constraints, which places those constraints in recent context, which improves adherence.

Anchor instructions are not perfectly reliable -- the model may stop performing the self-review over time -- but they provide a lightweight persistence mechanism that does not require external re-injection infrastructure.

Summarization with Rule Preservation

For very long conversations, context window limits may force conversation summarization. When summarizing, it is critical to preserve system prompt rules within the summary.

A summarization prompt should explicitly instruct: "Summarize the conversation so far. You MUST preserve the following rules in your summary: [list of key constraints]."

Without this explicit instruction, the summarization may discard the operational constraints, and the model will proceed without them. The summarization step is one of the highest-risk moments for instruction loss because the model is making decisions about what to keep and what to discard.

Why It Matters

Production Reliability

Any application with conversations longer than 10 turns -- customer service bots, tutoring systems, coding assistants, long-form writing tools -- must address instruction persistence. A chatbot that follows safety rules for the first 10 messages but violates them at message 25 has a ticking time bomb in production.

Safety and Compliance

In regulated industries, instruction decay can lead to compliance violations. A healthcare chatbot that stops including medical disclaimers after 20 turns, or a financial advisor that drops risk warnings in long conversations, creates legal and reputational risk. Persistence mechanisms are a safety requirement, not a nice-to-have.

User Experience Consistency

Users notice when the model's personality, tone, or capabilities shift mid-conversation. A writing assistant that starts formal and gradually becomes casual, or a customer service agent that starts helpful and gradually becomes terse, creates a disjointed experience. Persistence ensures the user experiences a consistent identity throughout the interaction.

Security Over Time

Instruction decay creates a gradually widening attack surface. A user (or automated attacker) who was rebuffed by a constraint in turn 5 may succeed with the same request in turn 30 as the constraint's influence weakens. Persistence mechanisms are therefore a security requirement for any application that handles sensitive operations or data.

Key Technical Details

- Decay onset: Significant instruction decay typically begins at 20-30 conversational turns, though stylistic instructions may decay as early as turn 10-15.

- Instruction priority in decay: Stylistic and tone instructions decay first, followed by output format rules, then behavioral constraints, then hard safety rules (which are reinforced by model training and resist longer).

- Re-injection frequency: Re-injecting key instructions every 10-15 turns maintains compliance above 85% for most applications.

- Re-injection cost: A 3-5 rule reminder consumes approximately 50-150 tokens per injection, a minimal cost relative to the conversation context.

- Anchor instruction effectiveness: Self-reinforcement anchor instructions improve persistence by an estimated 5-15% compared to no persistence mechanism, but are less reliable than explicit re-injection.

- Summarization risk: Conversation summarization that does not explicitly preserve system prompt rules drops instruction adherence by 30-50% after the summary.

- Context window interaction: Instruction decay is worse when the context window is nearly full, because the model must compress more aggressively and system prompt tokens receive less relative attention.

- Monitoring approach: Implement periodic "canary checks" by injecting known test scenarios at intervals to measure whether the model still adheres to key constraints.

- User-triggered drift: Users who gradually shift the conversation toward constrained topics can accelerate decay faster than neutral conversations, because the user's messages create competing context that dilutes the constraints.

Common Misconceptions

-

"The system prompt is always in context, so the model always follows it." Being in context is necessary but not sufficient. The model's attention to distant tokens decreases with distance, and system prompt instructions compete with an increasing volume of conversation content for the model's effective attention budget.

-

"Instruction decay only happens with weak models." All transformer-based models exhibit attention decay with distance. Frontier models resist decay better due to stronger instruction tuning, but they are not immune. Even GPT-4 and Claude 3.5+ show measurable instruction drift in conversations exceeding 30 turns.

-

"You can solve persistence by making the system prompt longer." A longer system prompt provides more initial instruction coverage but does not prevent decay. In fact, a very long system prompt may exacerbate the problem by spreading instructions across more tokens, reducing the salience of any individual rule.

-

"Re-injecting the entire system prompt is the best approach." Re-injecting the full system prompt wastes tokens and can cause the model to generate meta-responses about receiving instructions. Targeted re-injection of the 3-5 most critical rules is more efficient and less disruptive.

-

"Users won't notice instruction drift." Users are often the first to notice drift because they experience the behavior change directly. A user who was refused a request in turn 5 may succeed with the same request in turn 30, which is both a usability inconsistency and a potential safety gap.

Connections to Other Concepts

04-system-prompts-and-instruction-design/system-prompt-anatomy.md-- Understanding which system prompt components are most susceptible to decay informs which components to prioritize for re-injection.04-system-prompts-and-instruction-design/behavioral-constraints-and-rules.md-- Behavioral constraints are the most important instructions to persist because their violation has the most severe consequences.04-system-prompts-and-instruction-design/instruction-hierarchy-design.md-- Hierarchy awareness itself decays over long conversations, making hierarchy reinforcement a specific instance of the persistence problem.04-system-prompts-and-instruction-design/dynamic-system-prompts.md-- Dynamic system prompts can implement persistence by re-assembling and re-injecting instructions based on conversation length.01-foundations/context-window-mechanics.md-- Context window limits force conversation compression, which creates opportunities for instruction loss.

Further Reading

- Liu, N. F., Lin, K., Hewitt, J., et al. (2024). "Lost in the Middle: How Language Models Use Long Contexts." TACL. Demonstrates that information in the middle of long contexts is accessed less reliably, providing the empirical basis for understanding instruction decay.

- Anthropic. (2024). "Long Context Prompting Tips." Anthropic Documentation. Practical guidance on maintaining instruction adherence in long-context conversations with Claude.

- Li, Y., Bubeck, S., Eldan, R., et al. (2023). "Textbooks Are All You Need II: phi-1.5 Technical Report." While focused on training, discusses the attention dynamics that underlie instruction persistence challenges.

- Xu, F., Shi, J., & Choi, E. (2024). "Stress Testing LLM Instruction Following over Conversation Turns." Empirical study specifically measuring instruction adherence degradation across conversation lengths, quantifying the decay curves for different instruction types.